A third of 13 to 17-year-olds have seen footage of real-life violence on social media platform TikTok in the past year, research suggests.

The poll of about 7,500 teenagers for Home Office-backed charity the Youth Endowment Fund found a quarter had seen similar material on Snapchat, 20% on YouTube and 19% on Instagram.

Researchers found that across all social media platforms, the most common type of violent material viewed was footage of fights, with nearly half of the children polled (48%) having seen such clips.

Just over a third, 36%, had seen threats to beat someone up, while 29% had viewed people carrying, promoting or using weapons.

The survey also found more than a quarter (26%) of 13 to 17-year-olds had seen posts showing or encouraging harm to women and girls.

Asked how they had come across the material, 27% said the platform they were using had suggested it, while only 9% said they had deliberately accessed it.

Half said they saw it on someone else’s feed, while a third said it had been shared with them.

Jon Yates, executive director at the Youth Endowment Fund, which works to reduce youth violence, said: “Social media companies need to wake up. It is completely unacceptable to promote violent content to children.

“Children want it to stop. Children shouldn’t be exposed to footage of fights, threats or so-called ‘influencers’ peddling misogynistic propaganda.

“This type of content can easily stoke tension between individuals and groups, and lead to boys having misguided and unhealthy attitudes towards girls, women and relationships.

“As a society, we have a duty to help children live their lives free from violence, both offline and online.”

A TikTok spokesperson said: “TikTok removes or age-restricts content that’s violent or graphic, most often before it receives a single view, and provides parents with tools to further customise content and safety settings for their teens’ account.”

A Snapchat spokesperson said: “Violence has devastating consequences and there is no place for it on Snapchat.

“When we find violent content we remove it immediately, we have no open newsfeed and the app is designed to limit opportunities for potentially harmful content to go viral.

“We encourage anyone who sees violent content to report it using our confidential in-app reporting tools.

“We work with law enforcement to support investigations and partner closely with safety experts, NGOs and the police to help create a safe environment for our community.”

A YouTube spokeswoman said the site has strict policies prohibiting violent content and quickly removes material that violates them, with more than 946,000 videos taken down in the second quarter of 2023.

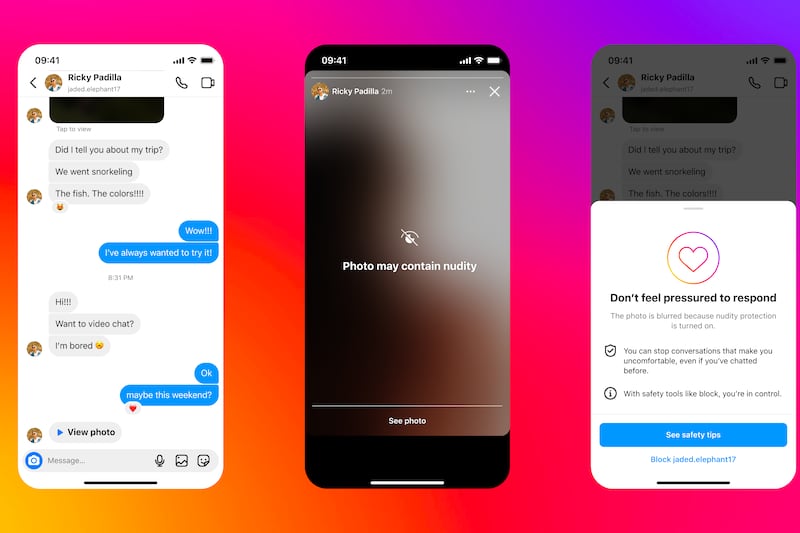

Instagram has been approached for comment.

– 7,574 children (aged 13 to 17) in England and Wales were surveyed online between May and June.